AI Didn’t Break American Education

It Just Exposed What Was Already Broken.

The core argument in brief:

AI did not create the crisis in American education. It revealed one that has been building for decades. Students who reach for AI are not failing morally; they are responding rationally to a system that has traded the pursuit of wisdom for the production of credentials. The answer is not stricter enforcement. It is a reformed system that gives students something genuinely worth doing.

When a student asks, “What’s the point of school if AI can just do this for me?” that question is not an indictment of artificial intelligence. It is a confession. A confession about what American education has quietly become: a credential-dispensing machine, optimized for outputs, indifferent to learning.

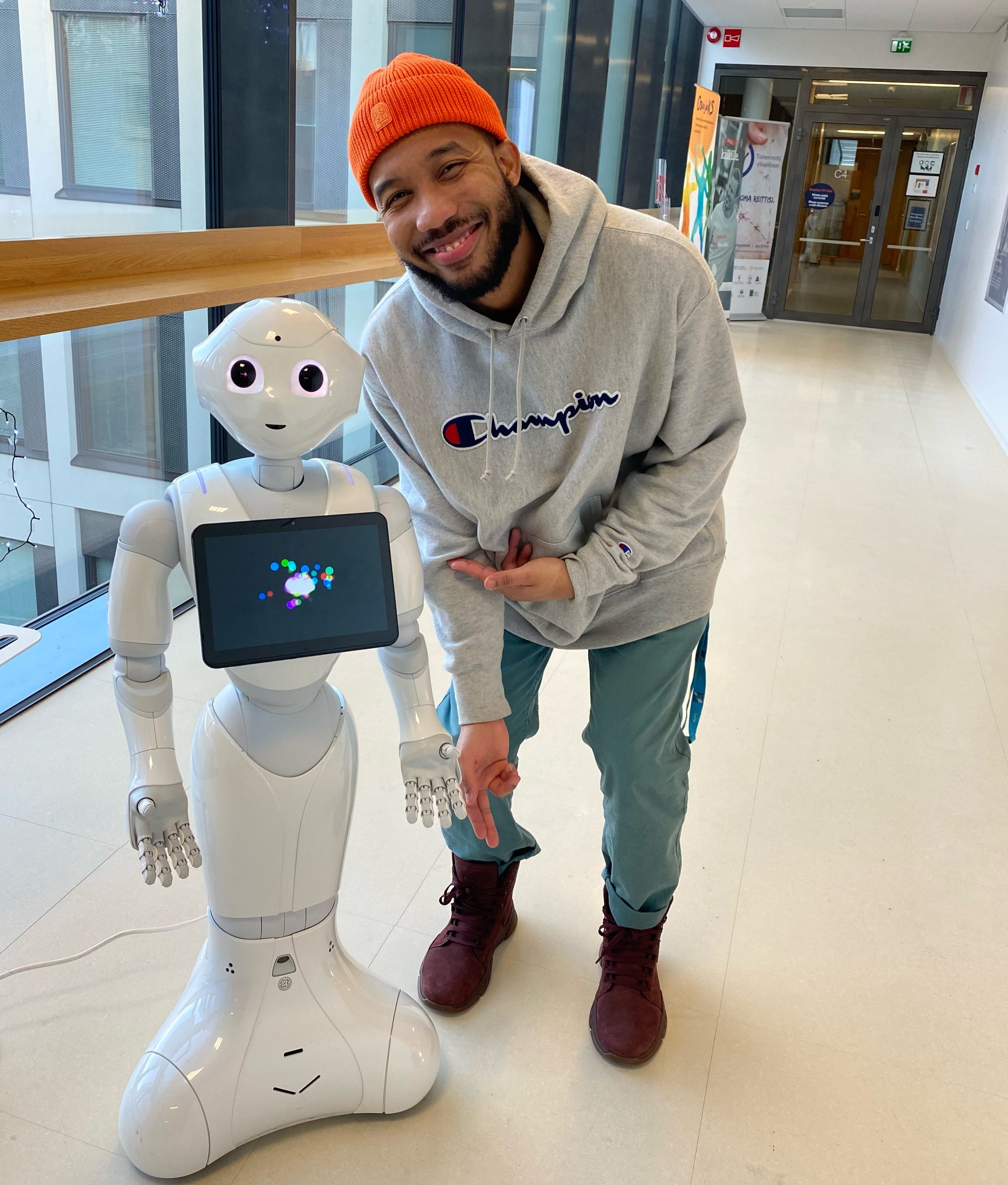

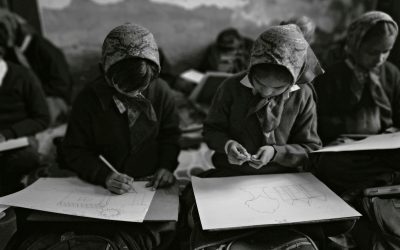

I have taught across five distinct education systems on four continents. I have stood in classrooms aboard the MV Logos Hope humanitarian ship, navigating a British curriculum with students who came from every corner of the world. I have taught in Trinidad and Tobago, where a post-colonial system shaped by British traditions still carries the quiet dignity of education as aspiration. I have worked in Finnish elementary schools and kindergartens in Helsinki, observed educational innovation across more than 45 countries through HundrED, and spent years teaching across Title I and non-Title I schools in Baltimore and Washington, D.C.

From that vantage point, I can say with confidence: artificial intelligence is not ruining American education. AI is simply the latest instrument to make visible what has been deteriorating beneath the surface for decades. The question we should be asking is not “What do we do about AI?” It is “What does our reaction to AI reveal about us?”

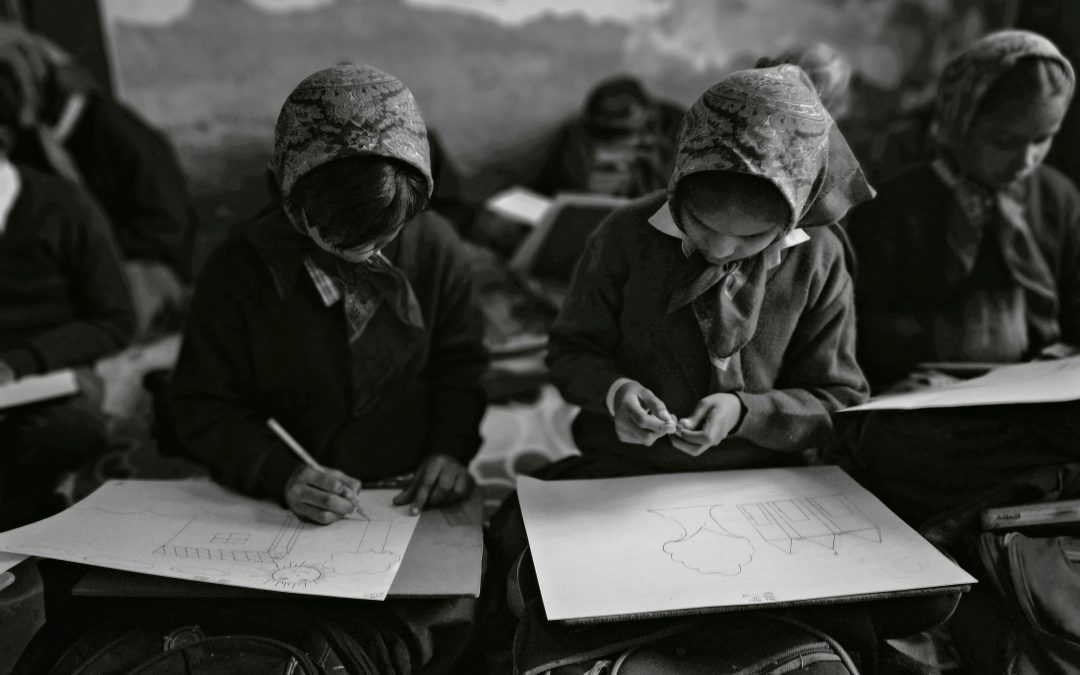

Part One: The Certificate and the Grade

In Finland, education is pursued because learning is considered intrinsically valuable. Finland’s success, it must be said honestly, is not simply a matter of strategies or ideas. It is a matter of the conditions underneath those strategies: high teacher autonomy, deep social trust, relative economic equity, and a cultural consensus around education’s value that American systems have yet to engineer. What Finland reveals is not a set of practices to be imported, but a set of conditions to be built. In Trinidad and Tobago, even within a system shaped by colonial inheritance, there existed a cultural reverence for knowledge, a sense that the educated person was not simply more employable, but more whole. On the MV Logos Hope, I taught young people who had crossed oceans not for a transcript, but because they believed ideas could change the world.

Then I arrived in America.

The first question I consistently heard from students, not just the struggling ones, not just the disengaged ones, but across schools and demographics, was some version of: “Is this for a grade?” Five words that contain an entire philosophy of education. An education understood not as a transformation of the self, but as a transaction. You perform; you receive a credential; the credential unlocks an income. Learning, in its deeper sense, becomes optional, or worse, collateral damage.

“Is this for a grade?” Five words that contain an entire philosophy of education.

This is not a criticism of American students. They have been taught, implicitly and explicitly, that this is what school is for. When universities advertise their graduates’ starting salaries before their ideas, when high school counselors measure success in acceptance letters rather than intellectual curiosity, when standardized testing defines what a school is worth, students are not irrational to ask what the grade is. They are reading the room correctly.

What AI has done is introduce a shortcut to the outcome. And when the outcome is the only thing that matters, a shortcut to the outcome is a perfectly rational choice. Students are not the villains here. They are the most honest participants in a system that has been lying to itself for years.

THE NEUROSCIENCE: YOUR BRAIN IS NOT LAZY. IT IS EFFICIENT.

Before we blame students for reaching for AI, we should understand something fundamental about how the human brain works, because the impulse to use AI is not a moral failure. It is a neurological default, and it is universal.

Nobel Prize-winning psychologist Daniel Kahneman spent decades mapping the architecture of human decision-making. In his landmark work Thinking, Fast and Slow (Farrar, Straus and Giroux, 2011), Kahneman described two cognitive systems that govern how we think and choose. System 1 is fast, automatic, and effortless. It operates beneath conscious awareness, scanning for familiar patterns and routing us toward the most immediately accessible solution. System 2 is slow, deliberate, and effortful: the analytical, reasoning mind that we engage when a problem genuinely demands it. Critically, Kahneman argued that System 2 is, by default, a reluctant participant. The brain treats cognitive effort as a cost, and it is perpetually inclined to let System 1 handle as much as possible.

This is not a design flaw. It is an evolutionary adaptation. Our ancestors could not afford to deliberate endlessly. Conserving mental energy for genuine threats and complex survival decisions was a competitive advantage. The brain learned to take shortcuts, what Kahneman calls heuristics, and to default to the lowest-effort pathway available. This tendency is so deeply embedded that researchers at University College London showed it can operate below the level of conscious awareness. In a 2017 study published in the journal eLife, neuroscientists Dr. Nobuhiro Hagura, Professor Patrick Haggard, and colleagues found that when one response requires more effort to execute, participants do not simply choose the easier option. Their perception itself shifts, so that the easier option appears to be the correct one. The brain does not merely take the path of least resistance. It tells itself a story about why that path was right all along.

The brain does not just choose the path of least resistance. It rationalizes it. Students reaching for AI are not defying their education. They are obeying their neurology.

Apply this to the classroom and the implications are immediate. When a student is presented with two pathways to the same output, an AI-generated essay versus the slow, cognitively demanding work of research, synthesis, argumentation, and revision, the brain does not evaluate these options neutrally. It experiences the second option as genuinely harder, and therefore as less appealing, less rewarding, and increasingly as less necessary. Kahneman’s research indicates that System 1 governs our decision-making the vast majority of the time. The deliberate override of that system requires motivation, environmental design, and a compelling internal reason to absorb the cognitive cost.

This is the tension students today are genuinely navigating, and it deserves to be named with compassion. We are asking young people whose brains are optimized for efficiency to voluntarily choose the more cognitively expensive path to an output that a machine can produce instantly. Without an internalized reason to value the process over the product, that is not a battle most brains will win on willpower alone.

The answer is not stricter plagiarism detection. That is a System 2 response to a System 1 problem: a rational solution to a challenge that operates beneath rationality. The answer is to redesign education so that the cognitive effort we ask of students produces something AI cannot replicate: genuine understanding, transferable judgment, and deep, durable learning that rewires the brain itself. When students are building capacities they can feel growing, capacities that belong to them and cannot be outsourced, the calculus changes. The higher-effort path becomes its own reward. That is not a utopian idea. It is what education looked like in the systems I watched work.

Part Two: The Production Tool and the Learning Tool

There is a distinction that has been almost entirely absent from public discourse about AI in education, and it is the most important one: teachers and students use AI for fundamentally different things.

A teacher using AI to generate a lesson plan, draft a rubric, or create differentiated materials is using AI as a production tool. The teacher already possesses the pedagogical knowledge, the understanding of learning objectives, student needs, content standards, and assessment design. AI accelerates the production of a deliverable. The expertise is human; the tool reduces friction.

A student who uses AI on an essay assignment is, or at least should be, doing something categorically different. The purpose of the essay was never the essay. It was the thinking that producing the essay requires: the synthesis of sources, the construction of an argument, the discipline of expressing complex ideas in coherent prose. When AI produces the essay, it produces the artifact without the cognition. The student has the output; they do not have the learning.

Teachers use AI for production. Students should be using AI for learning. These are not the same thing, and conflating them is where the crisis lives.

This is not a call to ban AI from classrooms. It is a call to be clear about what we are actually trying to produce. If we are producing graduates who can operate intelligently in a world saturated with AI, then we need to teach them to use AI critically, creatively, and with judgment. That requires thinking, not outsourcing thinking. The student who learns with AI, interrogating its outputs, stress-testing its reasoning, and pushing it toward nuanced answers, is building exactly the cognitive muscle the future demands. The student who simply submits AI output for a grade is building none of it.

The distinction is not about the tool. It is about whether learning, real, durable, transferable learning, is happening at all. And here, again, the problem predates AI. AI has simply made the absence of learning impossible to ignore.

Part Three: We Have Asked the Wrong Question

The national conversation has fixated on the wrong question. Across faculty meetings, op-ed pages, and policy briefs, the debate has centered on whether students still need to go to college. This question, while not without merit, obscures the more urgent one: Are we preparing students for 2040, or are we still preparing them for 2010?

There is a profound irony in where this question could and should begin. When Benjamin Franklin founded the University of Pennsylvania in 1749, he made a radical argument: that education should be practical, that it should prepare people to create change in the real world, that classical learning must be tethered to civic utility and applied skill. Penn was designed from its inception to produce not just learned individuals, but effective ones: people who could build things, solve problems, and reshape society. Franklin did not design a school optimized for prestige. He designed a school optimized for outcomes that mattered. That founding vision remains one of the most coherent arguments for what higher education should be in an era of accelerating change.

Benjamin Franklin designed Penn to produce people who could build things and change the world. The irony is that we have forgotten why.

The growing popularity of vocational schools, trade programs, and institutions that explicitly market themselves as places preparing students for life, not just a career, is not a rejection of higher education. It is a market signal. Students and families are increasingly paying for learning environments where the connection between education and real-world competence is explicit, not assumed. Benjamin Franklin was pointing us in this direction from the start. We are only now beginning to listen.

The question is not whether to go to college. It is what college should be in an era when AI can draft code, synthesize research, produce first-draft content, and automate entire categories of knowledge work. The answer is not that college becomes irrelevant. It is that college must become what it was always at its best: a place where humans learn to think, create, judge, lead, and collaborate at a level that no tool can replicate.

Part Four: We Survived the Calculator

This is not the first time a powerful tool has threatened to make a subject pointless. When calculators became widely available in the 1970s and 80s, the reaction among educators was remarkably similar to the conversation happening now: if machines can compute, why teach computation? The answer the field eventually arrived at was not to abandon mathematics. It was to adjust what mathematics education meant. The curriculum shifted toward mathematical reasoning, conceptual understanding, and problem formulation. The calculator handled arithmetic; humans learned to think mathematically.

We are at a comparable inflection point. AI handles information retrieval, pattern synthesis, first-draft generation, and procedural writing. The logical response is not to pretend AI does not exist, and not to surrender cognitive responsibility to it. It is to elevate what we ask of human minds. We should be teaching students to evaluate AI outputs with sophistication, to identify where AI reasoning breaks down, to formulate questions that tools cannot answer, and to bring judgment, ethics, and creativity to problems that resist algorithmic solutions.

These are not new competencies. They are ancient ones: critical thinking, ethical reasoning, creative synthesis, persuasive communication, and the ability to operate with integrity under uncertainty. What is new is the urgency. A student who cannot think critically in the age of AI is not merely underprepared. They are defenseless.

The calculator did not end mathematics. It clarified what mathematics education was actually for. AI will do the same, if we let it.

Part Five: What We Should Be Teaching Instead

If AI can produce the essay, run the calculation, write the code, summarize the research, and generate the lesson plan, then the urgent question is not how to stop students from using it. The urgent question is: what remains that only a human mind, properly developed, can do? The answer is not nothing. It is everything that actually matters.

We need to make a decisive shift in what we treat as foundational in education. For too long, academic skill development has been positioned at the center: subject-specific knowledge, procedural competence, standardized test performance, disciplinary fluency. These are not worthless. But they are increasingly insufficient, and in many cases, they are things AI can now replicate at a passing level on demand. They cannot be the summit of what we are building toward.

What AI cannot replicate, and what we are systematically under-teaching, is the full architecture of humanistic intelligence. This is not a nostalgic argument for the liberal arts. It is a forward-facing argument about what cognitive capacities will be most valuable and most irreplaceable as AI becomes ambient infrastructure.

The goal of education should not be to produce people who can pass tests. It should be to produce people who cannot be fooled, and who have the courage to lead when no algorithm can tell them what is right.

LEADERSHIP AND MORAL REASONING: THE CASE FOR A HUMANISTIC CORE

Leadership cannot be automated. Not because leadership is mystical, but because it is inherently relational, contextual, and ethically loaded. A leader must read a room, weigh competing human interests, make decisions under uncertainty, and take responsibility for outcomes. These capacities are developed through experience, through reflection, and through sustained engagement with the great questions of human civilization. Literature, history, philosophy, and ethics are not decorative additions to a curriculum. They are the training ground for judgment.

Philosophical inquiry in particular is grotesquely undervalued in American education. The capacity to ask foundational questions, what is true, what is just, what do we owe each other, what makes a life worth living, is not a luxury afforded to students on their way to careers in finance. It is the very foundation of operating ethically in a world where technical power routinely outruns moral wisdom. We are building students who can use powerful tools without ever developing a coherent framework for deciding whether they should.

EPISTEMOLOGY AS SURVIVAL SKILL: KNOWING WHAT IS REAL

Perhaps the most urgent competency for the AI era is one that sounds almost too simple to take seriously: the ability to determine what is real. We are entering an information environment in which synthetic media, AI-generated text, algorithmically curated feeds, and deliberate disinformation will be indistinguishable from reality for anyone who lacks the tools to interrogate them. This is not a future scenario. It is the present.

Epistemic literacy, the capacity to evaluate sources, trace claims to their origins, identify motivated reasoning, recognize rhetorical manipulation, and maintain calibrated uncertainty in the face of incomplete information, should be treated as a core graduation requirement at every level of education. It is more immediately practical than trigonometry for the overwhelming majority of students, and far more urgently needed.

In Finland, I observed classrooms where students were routinely asked not just what they knew, but how they knew it, and what would change their mind. These are not exotic pedagogical techniques. They are the foundational moves of intellectual integrity. American classrooms, shaped by content-coverage pressures and standardized assessment cultures, rarely have time for them. We cannot afford that anymore.

Epistemic literacy, knowing how to know, is the survival skill of the AI age. A student who cannot distinguish truth from fabrication is not just uninformed. They are a liability to themselves and to democratic society.

ANALYSIS, SYNTHESIS, AND CREATIVE JUDGMENT: WHAT MACHINES CANNOT DO

Analysis is not the same as information retrieval. AI is extraordinarily good at the latter and genuinely limited at the former. Genuine analysis requires a human being to bring prior knowledge, contextual awareness, and evaluative judgment into contact with new material, to decide not just what something says, but what it means, why it matters, and what should be done about it. This capacity is built through years of practice with complex texts, competing arguments, and high-stakes intellectual decisions. It cannot be shortcut.

Creative judgment, the ability to make aesthetic, ethical, and strategic choices in conditions of irreducible ambiguity, is similarly beyond AI’s reach in any deep sense. AI can generate options. It cannot meaningfully choose between them in the way that a human being, with a coherent value system and a stake in the outcome, can. Nurturing this capacity means giving students problems with no clean answers, asking them to defend choices they have made rather than solutions they have computed, and valuing the quality of reasoning over the correctness of outcomes.

This is a profound departure from how most American classrooms currently operate. It is also exactly what the moment demands. We do not need graduates who have memorized content that AI can retrieve in seconds. We need graduates who can walk into a room full of uncertainty, ask the right questions, synthesize what they find, and lead others toward something better. That is a humanistic education. It was always the point. We simply lost the thread.

Conclusion: A Mirror, Not a Wrecking Ball

AI has not broken American education. It has held up a mirror. What the mirror reflects is an education system that has, over decades, quietly traded the pursuit of wisdom for the production of credentials, and in doing so, left students without the internal compass that makes learning meaningful even when no grade is attached.

The students who ask “What’s the point if AI can do it?” deserve a serious answer. The answer is not a lecture about academic integrity. It is a reformed system that gives them something genuinely worth doing: learning that transforms them, that builds their capacity to lead, to think clearly, to distinguish truth from fabrication, to act with moral courage. Learning that no tool can replicate and no algorithm can shortcut.

We do not need to abandon subject knowledge. We need to subordinate it to something larger: the formation of human beings capable of navigating a world their teachers cannot fully imagine. That means recentering education on leadership, philosophical inquiry, epistemic discipline, analytical depth, and creative judgment. It means treating the humanities not as the soft electives on the margins of a serious education, but as the serious core around which everything else is organized.

Benjamin Franklin understood this. The Finns understand it. The question is whether American education, pressured by accountability metrics, credential arms races, and a cultural equation of learning with earning, can find its way back to it before the cost of not doing so becomes impossible to ignore.

The rise of AI has made the hard questions unavoidable. The structural gaps were always there. AI just turned the lights on.

The question now is whether we have the courage to look, and the will to build something worthy of what we see.

About the Author

Jonathan Frederick is the Founder and Managing Director of Staark Educational Solutions, an education systems engineering firm. He holds an M.A. in Changing Education from the University of Helsinki, a Georgetown Certificate in Education Finance, Strategy, and Policy, and is completing an M.S.Ed. in Education Entrepreneurship at Penn GSE with Wharton integration. He has taught across five education systems on four continents, observed 300+ educational innovations through HundrED in Helsinki, and spent seven years teaching in Title I schools across Baltimore and Washington, D.C.

j.frederick@staarkeducation.com